Physical rendering is heavy, and I'm not talking about problems with the Earth's gravitational pull. I'm talking about the processing power and time needed to create a beautiful rendering on a single node. It often forces me to choose between fast and noisy, or slow and mostly artifact-free. One way to alleviate the pain is to use a render farm like Sheep It, but even then, farms can impose restrictions such as 20-minute time limits.

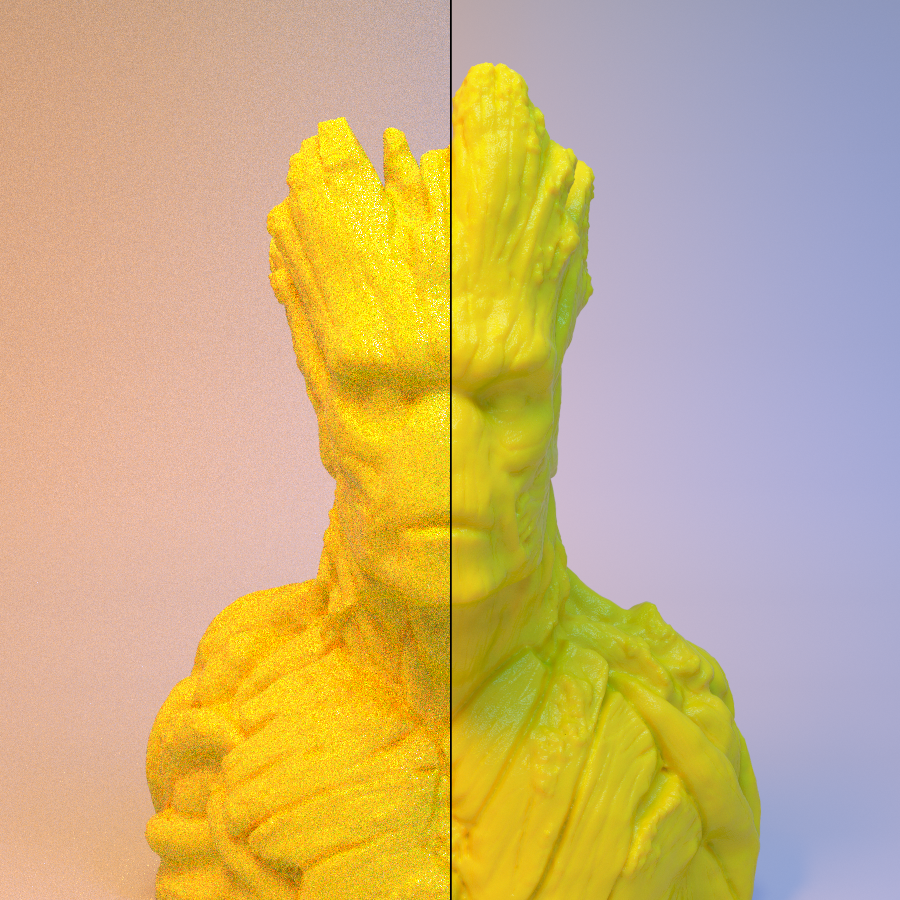

The solution I've found is image averaging. Rendering the same scene with a randomized seed each time produces images with unique noise patterns. If you average the value of every pixel across many of these images, the noise evens out, and given enough frames, you can get a pretty good-looking image.
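To make the idea concrete, here is a minimal sketch of per-pixel averaging in plain Python. It is not Imgavg's actual code: the function names, the flat-list image representation, and the Gaussian noise standing in for render noise are all assumptions made for illustration.

```python
import math
import random


def average_frames(frames):
    """Average equally sized frames pixel by pixel.

    Each frame is a flat list of floats, one value per pixel channel
    (an illustrative representation, not a real image format).
    """
    if not frames:
        raise ValueError("need at least one frame")
    size = len(frames[0])
    if any(len(frame) != size for frame in frames):
        raise ValueError("all frames must have the same dimensions")
    count = len(frames)
    # zip(*frames) groups the values that share a pixel position.
    return [sum(values) / count for values in zip(*frames)]


def noisy_render(clean, seed, sigma=0.2):
    """Hypothetical stand-in for a low-sample render: clean image plus seeded noise."""
    rng = random.Random(seed)
    return [value + rng.gauss(0.0, sigma) for value in clean]


def rms_error(image, reference):
    """Root-mean-square difference between an image and a reference."""
    squared = [(a - b) ** 2 for a, b in zip(image, reference)]
    return math.sqrt(sum(squared) / len(squared))


clean = [0.5] * 4096  # assumed flat grey "ground truth" image
frames = [noisy_render(clean, seed) for seed in range(250)]

single_error = rms_error(frames[0], clean)
merged_error = rms_error(average_frames(frames), clean)
print(f"single frame error: {single_error:.4f}")
print(f"250 merged frames:  {merged_error:.4f}")
```

If the noise in each frame is independent and unbiased, as it is in this toy model, the error of the merged image shrinks roughly with the square root of the number of frames. Real render noise may not behave this cleanly, so treat the numbers as a rough guide.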

I wrote a proof-of-concept command-line application called Imgavg (check it out on GitHub) to test my theory. In my tests, using a render farm together with Imgavg resulted in as much as a 14x speedup over rendering on my machine alone. The resulting images are shown below. I found it also works entertainingly well with completely random images, as long as all the images have the same dimensions.

Left: 1 frame, 40 samples. Right: 250 merged frames, 40 samples each.

Credit for the Groot bust goes to Doodle_Monkey on Thingiverse.